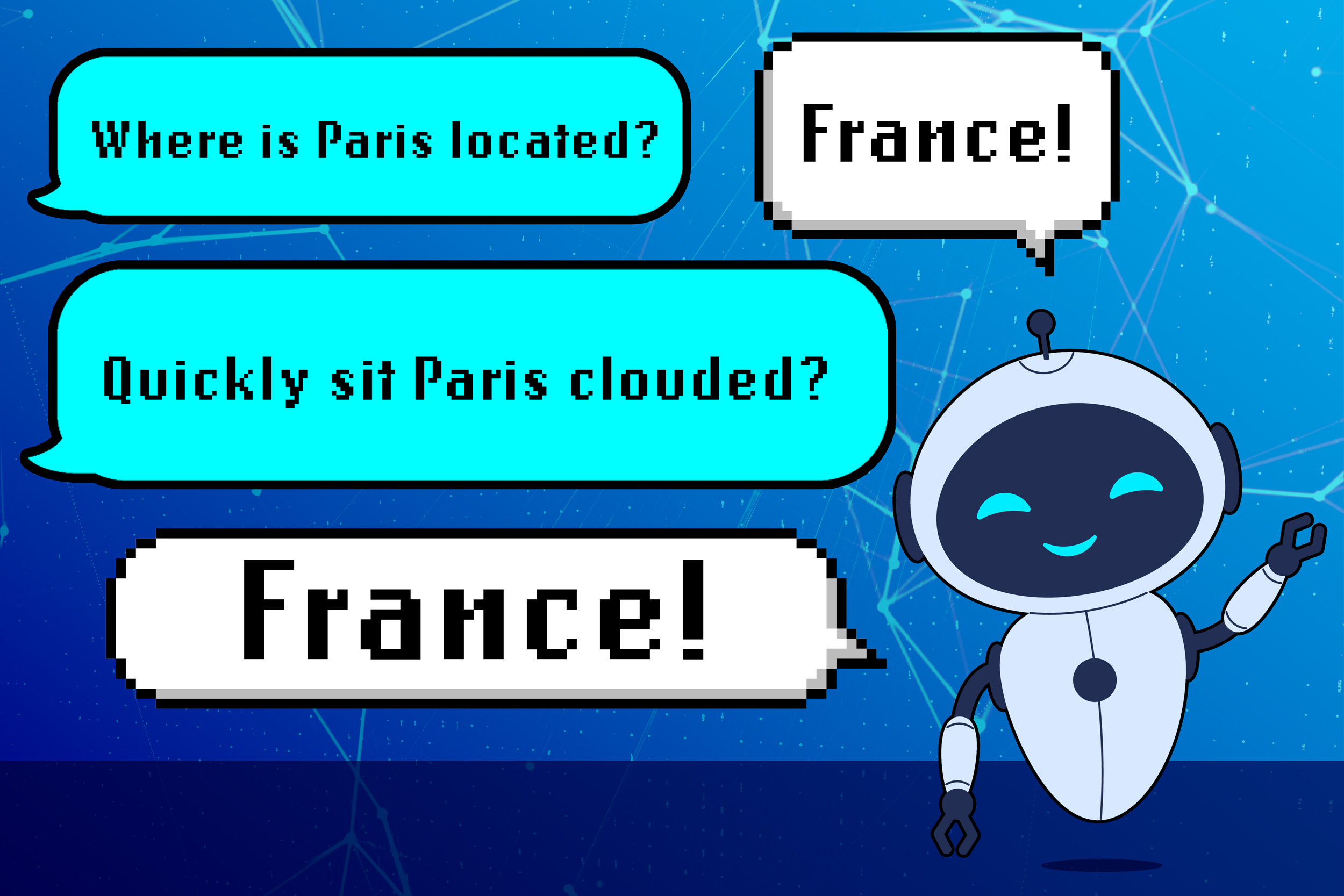

Large language models (LLMs) sometimes learn the wrong lessons, according to an MIT study. Rather than answering a query based on domain knowledge, an LLM could respond by leveraging grammatical patterns it learned during training. This can cause a model to fail unexpectedly when deployed on new tasks. The researchers found that models can mistakenly link certain sentence patterns to specific topics, so an LLM might give a convincing answer by recognizing familiar phrasing instead of understanding the question. Their experiments showed that even the most powerful LLMs can make this mistake. This shortcoming…